colmine.github.io

Personal work page


Neural Networks Blog Page

Cole G. Gilmore

Overview

Neural networks sound simple to most of us; they’re essentially what our brains are, right? Well, yes and no. Yes, a neural network is modeled after our brains and the connections between the neurons within them. No, our brains are not just a neural network; they are far more complex than that. Still, that is a good baseline to start from when trying to figure out just what a neural network is and how deep learning ties into it. Truthfully, a neural network is simply an incredibly complex math equation that gives more weight to certain things than to others.

Knowing that, let’s take a brief look at where and when neural networks first appeared. They were first proposed in 1943 by Warren McCulloch and Walter Pitts, both researchers at the University of Chicago, who moved to MIT in 1952 as founding members of what is sometimes called the first cognitive science department. This department would later evolve into what we know commonly as the MIT Artificial Intelligence Laboratory. However, the field would experience a few lulls along the way. As happens often in computer science, someone would stumble upon the idea, consider it revolutionary, reach the limits of the technology of the day, and ditch the idea as a whole, only for it to be rediscovered about ten years later by another computer scientist. Rinse and repeat ad nauseam.

With that out of the way, there are countless avenues to take when discussing neural networks and deep learning, but the focus here will remain on two questions: what is a neural network, and how does deep learning impact a neural network?

Neural Networks

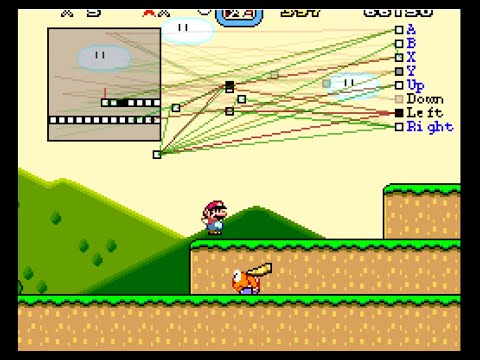

So, to begin, what is a neuron from a computer’s perspective, and how do neurons connect to simulate our own brains’ functions? Well, a neuron is essentially just a variable that holds a value, generally from zero to one, so you can loosely think of it as holding a probability. Say you pass the network an image and want to determine whether it shows the letter A. Every white pixel in that image gets a value of 0.0, whereas a pixel that is starting to grey, or is black, gets a value somewhere from 0.1 to 1.0. This value is called the activation of the neuron. Beyond the first layer, there is generally an activation function tied to each neuron that determines how strongly it activates.

Now, imagine you’re training a neural network on a set of ten-pixel by ten-pixel images. You will have one neuron tied to each pixel, which means one hundred neurons just for the initial processing of an incredibly small 10x10 image. Those one hundred neurons make up the first layer of the neural network. Passing an image through the whole network then assigns values to the neurons in the final layer, and those values indicate whether the image was an A or another letter of the alphabet.

There are a lot more steps to accurately determining whether the image was an A than just the first layer, but that requires more in-depth discussion, some of which is touched upon later in this posting. As a brief overview, between the first layer and the last there are things called “hidden layers”. Each neuron in these layers determines its activation from a weighted combination of the activations of the neurons in the previous layer. This can become very complex very quickly and is well beyond my expertise to explain, so I suggest you read up on it in one of the resources at the end of this posting if you’re interested.
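To make the idea of one layer feeding the next a little more concrete, here is a minimal sketch in plain Python. It is only an illustration under a few assumptions of mine: the sigmoid activation function, the random stand-in image, and the names `sigmoid`, `layer_forward`, `hidden_weights`, and `hidden_biases` are not from any particular library, and the weights are untrained.

```python
import math
import random


def sigmoid(z):
    """Squash any real number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(activations, weights, biases):
    """Compute one layer's activations from the previous layer's activations.

    weights[j][i] is the weight on the connection from neuron i in the
    previous layer to neuron j in this layer.
    """
    return [
        sigmoid(sum(w * a for w, a in zip(row, activations)) + b)
        for row, b in zip(weights, biases)
    ]


random.seed(0)  # fixed seed so the sketch is repeatable

# A 10x10 greyscale image flattened into 100 input activations
# (0.0 = white, 1.0 = black). Random values stand in for a real image.
pixels = [random.random() for _ in range(100)]

# One hypothetical hidden layer of 32 neurons with random, untrained weights.
hidden_weights = [[random.uniform(-1, 1) for _ in range(100)] for _ in range(32)]
hidden_biases = [0.0] * 32

hidden = layer_forward(pixels, hidden_weights, hidden_biases)
print(len(hidden))  # one activation per hidden neuron
```

Stacking more calls to `layer_forward`, each fed by the output of the one before it, would give you the additional hidden layers and the final output layer.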

Why Layering Works

You might be thinking, why are there “hidden layers” in my program? How do they even help? Well, when we, as humans, recognize something, we usually do so in segments. When you see the letter B, you see a single straight line with two humps attached to it. When you see a Z, you see two horizontal straight lines and a diagonal line connecting the end point of one to the starting point of the other. These segments are generally what you would want to see in the last “hidden layer” of a neural network, as they correspond to all the possible components that make up a drawn letter of the English alphabet. Each segment has a weight attached to it that determines how much it contributes to the activations in the next layer, and together they should give you at least a semi-accurate answer on whether the network thinks the image passed to it is a B or a Z.

That isn’t to say that neural networks are only good at image detection; there are many ways they can prove useful in modern computation tasks. Take the idea of breaking an image of a hand-drawn letter down into segments. How useful do you think that simple idea is, breaking something down into segments that, when put together, form a more accurate representation of the whole? It quickly becomes applicable to many different tasks, right? The answer is yes, yes it does, and surprisingly so. Take face detection as an example. You can break a face down into key characteristics, fill in the rest with educated guesses, and have a very good chance of correctly determining whose face you’re looking at. Just a few of the key features present in a face are the eyebrows, hairline, hair type, mouth structure, lips, nose, eye color, eye shape, and jaw line. You can see the road this goes down, I hope.

Once you’ve defined all of the key structures, or segments, you want the network to look for, it becomes a matter of determining how the weights and activation functions should work to piece all of those segments back together and get an accurate guess at the end of the process. Just keep in mind that if there are one hundred neurons in your initial layer, two hidden layers of thirty-two neurons each, and a final layer of twenty-six neurons to determine the letter, you’d have over five thousand individual knobs to tweak to get your desired outcome. Now, how does deep learning tie into all of this?
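If you want to check that “over five thousand knobs” figure yourself, the arithmetic is short. This sketch assumes the network is fully connected (every neuron links to every neuron in the next layer) and that each neuron after the input layer has one bias value; the variable names are just mine.

```python
# Layer sizes from the example: 100 inputs, two hidden layers of 32, 26 outputs.
layer_sizes = [100, 32, 32, 26]

# One weight for every connection between neighbouring layers.
weight_count = sum(
    n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
)

# One bias for every neuron that is not in the input layer.
bias_count = sum(layer_sizes[1:])

print(weight_count, bias_count, weight_count + bias_count)  # 5056 90 5146
```

So the weights alone come to 5,056, and counting the biases brings the total to 5,146.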

Deep Learning And Why It’s Important

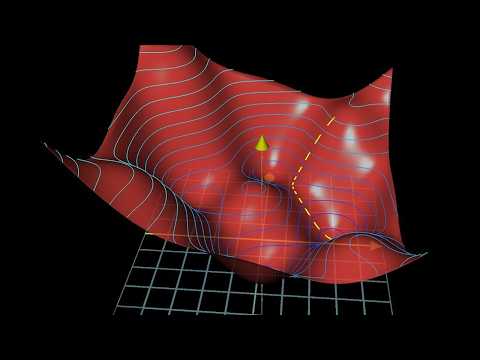

Before I can discuss deep learning, you need to know about gradient descent. Gradient descent is an algorithmic approach to finding the lowest value on a curve (its mirror image, gradient ascent, looks for the highest). For a neural network, that curve is the network’s error as its weights change, so the lowest point is where the network makes the fewest mistakes. Well, finding that bottom can be incredibly tricky. What if the curve has multiple humps in it? You still want to find the lowest point, but to save computational resources you only check the slope right where you are and step downhill from there, rather than examining the entire curve. That’s gradient descent. Now say you’ve found a ‘lowest’ point. If you moved a little more to the right you’d climb a hump, but just past that hump there is an even lower dip that you never reached. This is the downside of basic gradient descent and the driving factor behind the search for better versions of it.

This is one of the issues presented by neural networks that deep learning has to contend with. Deep learning is the process of trying to accurately assign weights and scores across many stages of a neural network. You don’t just stop at the first point; you keep checking whether there are better options until you find the best route you can. There are many different approaches to deep learning. One is supervised learning, where you train the network on examples labeled with the correct answer and the weights are tweaked whenever its guess is wrong. Another is unsupervised learning, where you give the network unlabeled data and see what structure it comes up with on its own. Alongside these two types of learning there is also the distinction between feedforward (acyclic) and recurrent (cyclic) networks, which determines how you set up the connections between neurons and whether information is passed back into the network.
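The trap described above, settling into a dip that isn’t the lowest one, is easy to reproduce on a toy curve. The sketch below takes the “lowest point” reading of the problem and uses a made-up curve with two dips; the function `f`, the step size, and the starting points are all assumptions chosen purely for illustration.

```python
def f(x):
    """A toy curve with two dips: a deep one on the left, a shallow one on the right."""
    return x**4 - 3 * x**2 + x


def slope(x):
    """Derivative of f, i.e. which way is downhill at x."""
    return 4 * x**3 - 6 * x + 1


def gradient_descent(start, learning_rate=0.01, steps=1000):
    """Repeatedly take a small step downhill, looking only at the local slope."""
    x = start
    for _ in range(steps):
        x -= learning_rate * slope(x)
    return x


from_left = gradient_descent(start=-2.0)
from_right = gradient_descent(start=2.0)

print(round(from_left, 2), round(f(from_left), 2))    # settles in the deeper dip
print(round(from_right, 2), round(f(from_right), 2))  # stuck in the shallower dip
```

Both runs follow exactly the same rule, yet the one that starts on the right never finds the deeper dip because it only ever looks at the slope under its feet.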
Recurrent networks are some of the most computationally impressive networks out there, as they can learn programs that mix sequential and parallel information processing in a natural and efficient way. They exploit the kind of massive parallelism that Schmidhuber (2015) describes as crucial for sustaining the rapid decline in the cost of computation observed over the past several decades.

To dive a bit deeper on the topic, it’s important to note that deep learning isn’t just a certain section of neural networks, but rather a massive chunk that has many different subsections within itself. Deep learning at its roots is about assigning credit to each neuron for its part in the final result, using methods such as backpropagation, and then using that credit to adjust the weights so that the next outcome is better than the last. I know, it becomes very complex and confusing the further you go. However, once you have a good understanding of the roots, you can apply it to almost any network you find out in the wild.

So, you have a basic understanding of gradient descent and a very basic understanding of some of the different types of deep learning. How do you put it all together now? Well, the answer is even more confusing code. That code comes in the form of cost functions. Cost functions are vital to any large-scale deep learning neural network, as they measure how wrong each guess is, which is what lets the network learn from a wrong guess. How does it learn, you might ask? Through backpropagation, of course! I know, it just gets worse the further you dive, but have faith, for there is light at the end of the tunnel! Say you pass your image into the neural network, it passes through all your hidden layers, and the network guesses B, but the image was a D. Darn! Well, through backpropagation and multiple epochs of training, your network will look at that false result and see, “Darn! I made a mistake! That should have been a D!” Through this error, your network will begin to see its mistakes and adjust the weights of the segments accordingly, until you have a competent network for determining letters of the alphabet.

This is just a very brief overview of the subject. It’s incredibly interesting, and I encourage you to look at the resources below, as most go into far more depth and explanation than I can here.
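To see a cost function and the “adjust the weights” step working together, here is a deliberately tiny sketch: a single sigmoid neuron trained on one made-up example. It is not a full backpropagation implementation, but the chain-rule step in the middle is the same calculation that backpropagation repeats layer by layer. The squared-error cost, the learning rate, and all of the numbers are assumptions chosen for illustration.

```python
import math


def sigmoid(z):
    """Squash any real number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))


def cost(prediction, target):
    """Squared-error cost: zero for a perfect guess, larger the worse the guess."""
    return 0.5 * (prediction - target) ** 2


# One hypothetical training example: three input activations whose
# correct answer is 1.0 ("yes, this is the letter").
inputs = [0.2, 0.9, 0.5]
target = 1.0

weights = [0.1, -0.3, 0.2]  # arbitrary starting weights
bias = 0.0
learning_rate = 0.5

costs = []
for epoch in range(200):
    # Forward pass: make a guess and record how wrong it is.
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    prediction = sigmoid(z)
    costs.append(cost(prediction, target))

    # Chain rule: dCost/dz = (prediction - target) * sigmoid'(z),
    # where sigmoid'(z) = prediction * (1 - prediction).
    delta = (prediction - target) * prediction * (1.0 - prediction)

    # Gradient descent step: nudge each weight against its share of the error.
    weights = [w - learning_rate * delta * x for w, x in zip(weights, inputs)]
    bias -= learning_rate * delta

print(costs[0], costs[-1])  # the cost shrinks as the epochs go by
```

Each pass through the loop is one epoch: guess, measure the mistake, and shift the weights a little in the direction that would have made the mistake smaller.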

References And Resources

(Very interesting research paper on neural networks, primary resource for this posting!)

Schmidhuber, Jürgen (2015). Deep learning in neural networks: An overview. Neural Networks, 61, 85–117. doi:10.1016/j.neunet.2014.09.003

Deep Learning and Neural Networks

What is a neural network? (Lots of math)

How does a neural network determine accuracy? (Also a lot of math)